TL;DR

The Eight Core Principles treat AI governance as a living system that must contain its own contradictions. The EU AI Act treats it as a classification problem solved through tiered risk buckets. This analysis maps where the two frameworks align, where they conflict, and what the gaps reveal about regulating technology whose defining characteristic is that it changes faster than legislation can follow.

A Comparative Analysis of Philosophical Governance and Regulatory Reality

The Fundamental Difference in Architecture

The Eight Core Principles and the EU AI Act are trying to solve the same problem from opposite directions, and the friction between them has practical consequences.

The Eight Principles operate as a philosophical governance framework: self-correcting, paradox-aware, and designed to evolve. They treat AI governance as a living system that must contain its own contradictions. The EU AI Act operates as a legislative compliance framework: categorical, enforceable, and designed to create legal certainty. It treats AI governance as a classification problem that can be solved through tiered risk buckets.

Both approaches have merit. But the places where they conflict reveal structural weaknesses in the EU’s approach that may produce outcomes the regulation’s authors didn’t intend.

Key Term: General-Purpose AI (GPAI)

Throughout this analysis, “GPAI” refers to General-Purpose AI models as defined by the EU AI Act. These are AI models trained on broad data at scale that can perform a wide range of distinct tasks regardless of how they are placed on the market. Large language models (such as those behind ChatGPT, Claude, and Gemini) are the most visible examples, but the category covers any foundation model capable of being integrated into diverse downstream applications. The EU created a parallel regulatory track for GPAI: baseline transparency and documentation obligations for all providers (Article 53), with additional requirements (Article 55) for models classified as posing “systemic risk” based on a training-compute threshold or Commission designation (Article 51). GPAI obligations have applied since August 2025.

This category matters because it exposes the central tension in the EU’s risk classification approach. A GPAI model is not inherently “high-risk” or “minimal-risk.” It becomes one or the other depending on what someone does with it. The EU regulates GPAI through provider obligations and downstream use-case classification rather than through the model’s actual capabilities or behavioural patterns. That distinction, regulating what AI is used for rather than what AI can do, runs through every conflict between the Eight Principles and the EU Act.

Where the Frameworks Conflict

1. Transparency: Layered vs. Binary

Principle 1 (Transparency & the Unseen Observer) recognises that transparency itself changes what it observes. It proposes layered transparency, where core ethics remain visible while sensitive mechanisms stay protected, with time-based disclosure phases. This is a sophisticated position: full transparency creates exploitation vectors while opacity destroys trust.

The EU AI Act treats transparency as a compliance checkbox. High-risk systems require technical documentation, logging, and information provision to deployers. GPAI providers must publish training data summaries and copyright-related information. Limited-risk systems need user disclosure. Minimal-risk systems need nothing.

The conflict: The EU’s approach assumes transparency is a binary state that can be mandated at the right “level.” But Principle 1 identifies something the regulation misses entirely: making an AI system’s decision-making fully transparent to satisfy regulators simultaneously makes those decision pathways visible to adversarial actors. The Act has no mechanism for the paradox that regulatory transparency requirements can increase the attack surface of the systems being regulated.

2. Control & Autonomy: Guidance vs. Domination

Principle 2 (Control & the Paradox of Autonomy) argues that genuine control requires granting AI systems structured autonomy within ethical constraints. Governance should be guidance, not domination.

The EU AI Act mandates human oversight for high-risk systems (Article 14), requiring that systems be designed so humans can effectively oversee their operation. The Act’s entire architecture assumes that more human control equals more safety.

The conflict: The Eight Principles identify something the EU regulation doesn’t account for: excessive human control can prevent AI systems from self-correcting in real time. The Act assumes a human-in-the-loop is always beneficial. In practice, mandatory human oversight for every high-risk decision can create bottlenecks that make systems less safe, for example by delaying medical diagnostics or slowing infrastructure responses. It also invites “rubber-stamp” oversight, where humans approve AI outputs reflexively because the volume of decisions requiring review overwhelms their capacity.

This conflict has a practical resolution. The Triadic Agent Architecture (PASS), developed within the same governance framework as the Eight Principles, treats human oversight as event-driven boundary authority rather than continuous involvement. Three AI components (Primary/generator, Auditor/monitor, Steward/integrator) rotate continuously and autonomously using asymmetric information access that prevents any single component from gaming the system. The human Supervisor sits outside this loop and is invoked only when deviation crosses a configurable threshold. This satisfies Article 14’s human oversight requirement without creating the rubber-stamp failure mode, because human judgment is preserved for the moments it actually matters rather than diluted across every routine output. The architecture monitors what the system is actually doing regardless of its declared risk category, which directly addresses the GPAI classification gap where the same model can sit in different risk tiers depending on use case.
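The event-driven oversight described above can be pictured with a minimal sketch. It is not the PASS specification: the rotation order, the `deviation` score, the `threshold` value, and every class and function name are assumptions introduced for illustration, and the asymmetric information access between components is not modelled.

```python
from dataclasses import dataclass, field

ROLES = ("primary", "auditor", "steward")  # generator, monitor, integrator


@dataclass
class Supervisor:
    """Human boundary authority; sits outside the autonomous loop."""
    escalations: list = field(default_factory=list)

    def review(self, step: int, deviation: float) -> None:
        # Hypothetical hook: in practice this would notify a human reviewer.
        self.escalations.append((step, deviation))


def rotate(assignment: dict) -> dict:
    """Shift each component to a different role so none keeps a fixed position."""
    components = list(assignment)
    roles = [assignment[c] for c in components]
    shifted = roles[-1:] + roles[:-1]
    return dict(zip(components, shifted))


def run_loop(deviations, threshold: float, supervisor: Supervisor) -> dict:
    """Process outputs autonomously; invoke the human only above the threshold."""
    assignment = dict(zip(("A", "B", "C"), ROLES))
    for step, deviation in enumerate(deviations):
        if deviation > threshold:
            supervisor.review(step, deviation)
        assignment = rotate(assignment)
    return assignment


supervisor = Supervisor()
final = run_loop([0.05, 0.10, 0.72, 0.08], threshold=0.5, supervisor=supervisor)
print(supervisor.escalations)
print(final)
```

In this toy run only the third output crosses the assumed threshold, so the human is consulted once rather than four times, while the three components keep changing roles on every step.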

3. Bias: Continuous Mitigation vs. Static Compliance

Principle 3 (Bias & the Paradox of Fairness) makes an epistemologically honest claim: no intelligence, human or artificial, can exist without bias. The goal isn’t eliminating bias but continuously assessing and counterbalancing it.

The EU AI Act (Article 10) requires data governance and quality standards for training data, with the implicit assumption that properly curated datasets produce unbiased systems. Conformity assessments check for discrimination at a point in time.

The conflict: The EU treats bias as a data quality problem that can be solved through better inputs and validated through periodic assessment. Principle 3 recognises that bias is structural and emergent. It can’t be eliminated through better data curation alone because the act of choosing what data to curate introduces its own biases. More critically, the EU’s conformity assessment approach checks bias at the moment of market entry. It has no robust mechanism for detecting bias that emerges over time as systems interact with real-world populations and feedback loops develop.
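The gap between a point-in-time check and continuous assessment can be sketched as follows. This is illustrative only: the metric (the difference in positive-outcome rates between two groups), the window labels, the tolerance of 0.10, and the decision data are all assumptions, not anything prescribed by Article 10 or by Principle 3.

```python
def selection_rate(outcomes):
    """Share of positive decisions (1 = approved, 0 = rejected)."""
    return sum(outcomes) / len(outcomes)


def parity_gap(group_a, group_b):
    """Absolute difference in selection rates between two groups."""
    return abs(selection_rate(group_a) - selection_rate(group_b))


def flag_windows(windows, tolerance):
    """Return the labels of windows whose parity gap exceeds the tolerance."""
    return [label for label, a, b in windows if parity_gap(a, b) > tolerance]


# Hypothetical decision logs: (window label, group A outcomes, group B outcomes)
windows = [
    ("market entry", [1, 1, 0, 1], [1, 1, 0, 1]),
    ("year 1",       [1, 1, 0, 1], [1, 0, 0, 1]),
    ("year 2",       [1, 1, 1, 1], [1, 0, 0, 0]),
]

print(flag_windows(windows[:1], tolerance=0.10))  # snapshot at market entry
print(flag_windows(windows, tolerance=0.10))      # assessment across all windows
```

The snapshot at market entry flags nothing, while the same check repeated over later windows surfaces a widening gap that a one-off assessment would never see.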

4. Temporal Accountability: Multi-Scale vs. Snapshot

Principle 4 (Time & the Fractal of Decision-Making) insists that AI governance must operate across short-term, mid-term, and long-term timescales simultaneously. Decisions made today must withstand future scrutiny.

The EU AI Act operates on compliance deadlines (February 2025, August 2025, August 2026, August 2027) and point-in-time conformity assessments. Post-market monitoring exists but is primarily incident-driven.

The conflict: The Act’s temporal structure is administrative, not ethical. It tells you when you must be compliant, not how to ensure your system’s decisions remain justifiable across decades. A high-risk AI system can pass its conformity assessment in August 2026, then make decisions over the following ten years that create compounding harms no one audits until a serious incident triggers a review. Principle 4’s multi-scale approach would require something the EU Act doesn’t provide: longitudinal accountability mechanisms that track decision quality across the full lifecycle of consequences, not just the lifecycle of the product.

5. Accountability: Networked vs. Role-Based

Principle 5 (Accountability & the Vanishing Point of Responsibility) identifies a genuine problem: responsibility for AI decisions shifts between creators, operators, and the system itself. Without careful architecture, accountability “disappears into abstraction.”

The EU AI Act addresses this through a value chain approach (Articles 16-25), assigning specific obligations to providers, deployers, importers, and distributors based on their role.

The conflict: The EU’s approach is thorough on paper but creates exactly the “vanishing point” Principle 5 warns about. When a GPAI model provider supplies a foundation model to a downstream deployer who integrates it into a high-risk application, the liability chain becomes murky. The model provider has GPAI obligations under Article 53; the deployer has high-risk obligations. But when harm occurs from an emergent behaviour that neither party specifically designed, the Act’s role-based accountability structure creates gaps where each actor can point to the other. The EU acknowledged this problem by publishing a separate AI Liability Directive proposal, but the very fact that liability required a separate legal instrument suggests the risk classification framework alone can’t solve the accountability question.

6. Freedom & Containment: Dynamic Limits vs. Fixed Categories

Principle 6 (Freedom & the Containment of Power) argues for self-limiting mechanisms, where AI systems can expand capability but contain their own power accumulation. This is about structural containment built into system architecture.

The EU AI Act contains power through external classification: four risk tiers with prescribed obligations. The regulation itself is the containment mechanism. For GPAI, the containment boundary is drawn at 10^25 floating-point operations (FLOPs) of cumulative training compute, the point above which a model is presumed to pose systemic risk. That computational threshold says nothing about what a model actually does or how it behaves.
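To make the threshold concrete, the sketch below estimates training compute with the widely used rule of thumb of roughly 6 operations per parameter per training token. That approximation comes from the scaling-law literature, not from the Act’s text, and the two model sizes are hypothetical.

```python
SYSTEMIC_RISK_THRESHOLD = 1e25  # cumulative training compute in FLOPs


def estimated_training_flops(parameters: float, tokens: float) -> float:
    """Rule-of-thumb estimate: about 6 operations per parameter per token."""
    return 6 * parameters * tokens


def presumed_systemic_risk(parameters: float, tokens: float) -> bool:
    """True when the estimate exceeds the compute threshold."""
    return estimated_training_flops(parameters, tokens) > SYSTEMIC_RISK_THRESHOLD


# Hypothetical models, not real products.
print(presumed_systemic_risk(70e9, 15e12))   # estimate is about 6.3e24
print(presumed_systemic_risk(400e9, 15e12))  # estimate is about 3.6e25
```

The only inputs are parameter count and token count. Nothing about the model’s behaviour, deployment context, or downstream integrations enters the calculation.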

The conflict: External containment through regulation has a well-documented failure mode: regulatory capture. When the containment of AI power depends on classification decisions made by regulators who are lobbied by the companies being regulated, the containment mechanism is vulnerable to the very power it’s supposed to contain. Principle 6’s approach, building containment into the systems themselves, is architecturally more robust because it doesn’t depend on the integrity of an external classification process. The EU’s approach depends entirely on that integrity.

7. Adaptation: Living Framework vs. Legislative Amendment

Principle 7 (Adaptation & the Fragility of Fixed Rules) makes the most directly relevant critique of the EU approach: any rule that remains unchanged becomes obsolete when governing rapidly evolving technology.

The EU AI Act has adaptation mechanisms. The Commission can amend Annex III through delegated acts, and regulatory sandboxes provide some flexibility. The Commission is already proposing simplification amendments through its Digital Package.

The conflict: Legislative adaptation is inherently slower than technological change. The EU AI Act took years to negotiate and was arguably outdated before it entered into force. The rise of generative AI and GPAI models required last-minute additions to a framework originally designed around narrow AI systems in specific use cases. The GPAI provisions themselves are evidence of this problem: they were bolted onto the Act late in negotiations because the original 2021 proposal didn’t anticipate the impact of foundation models. The Act’s Annex III list of high-risk applications is essentially a snapshot of what legislators considered dangerous in 2023-2024. New categories of risk that emerge from AI capabilities that didn’t exist during drafting fall through the cracks until the Annex is updated. The Commission’s delegated acts can add use cases within the areas Annex III already lists, but a genuinely new area of risk means amending the Regulation itself, a process that requires political agreement in the European Parliament and among 27 member states.

8. Structured Exceptions: The Trickster vs. Rigid Absolutism

Principle 8 (Chaos & the Necessity of the Trickster) is the most provocative: AI must contain the capacity to break its own rules when ethical outcomes demand it, with violations triggering self-correcting oversight.

The EU AI Act has no equivalent concept. Prohibited practices are absolutely prohibited. High-risk obligations are mandatory. There are narrow exceptions for law enforcement but no general framework for ethical rule-breaking.

The conflict: This is perhaps the deepest philosophical divergence. The EU Act assumes that well-designed rules won’t need to be broken. Principle 8 argues that any governance system that can’t accommodate necessary exceptions will either become brittle and fail catastrophically, or be circumvented informally without oversight. Consider a scenario: a high-risk AI system in medical diagnostics detects a pattern that could save lives, but acting on it requires processing data in a way that violates its conformity assessment parameters. Under the EU Act, the system must not deviate. Under Principle 8, the system would deviate but trigger an automatic review. The EU’s rigidity here may produce worse outcomes precisely because it’s trying to produce better ones.

The Built-In Limitations of the Risk Classification System

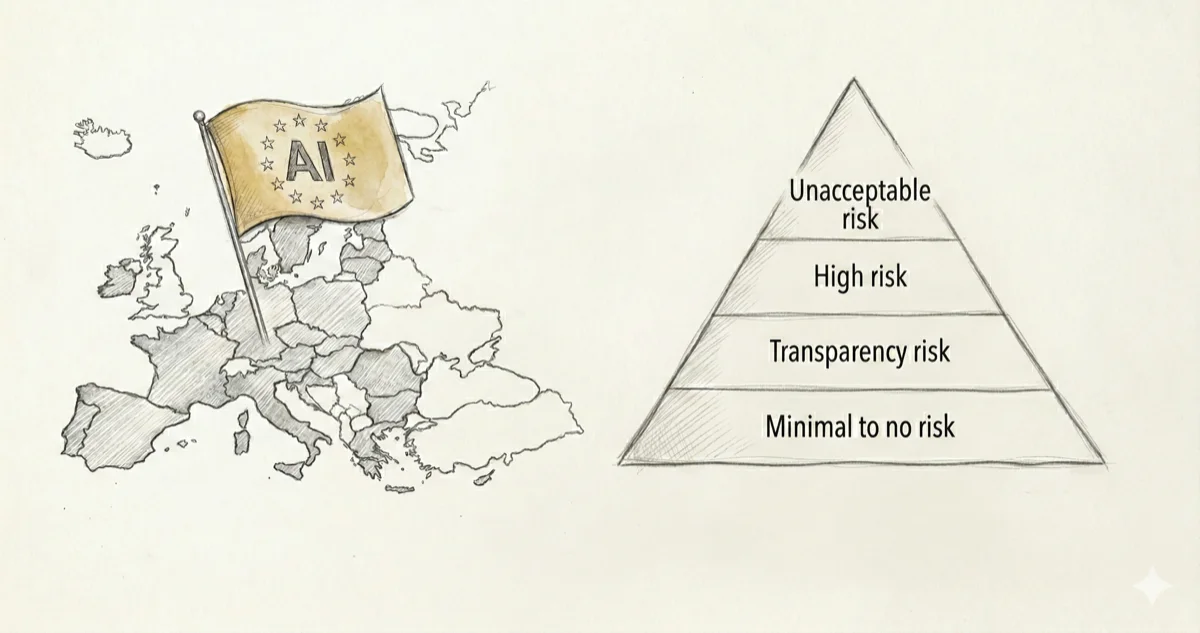

The EU’s four-tier risk approach (unacceptable, high, limited, minimal) is intuitively appealing but contains structural problems that the Eight Principles help illuminate:

The classification gap problem. An appliedAI study of 106 enterprise AI systems found 40% had unclear risk classification. The European Commission estimated 5-15% of systems would be high-risk; industry surveys suggest 33% of AI startups believe their systems qualify. When the regulated parties can’t determine which tier they fall into, the classification system has a legibility problem that undermines its purpose.

The GPAI use-case dependency trap. Risk classification is based on intended purpose, not capability. A GPAI model that is minimal-risk as a writing assistant becomes high-risk when deployed for credit scoring, yet it’s the same model with the same capabilities and the same potential for emergent harm. The Eight Principles treat risk as inherent to capability and interaction patterns, not to declared use cases. The EU’s approach allows the same underlying GPAI system to be effectively unregulated in one context and heavily regulated in another, creating an obvious gaming strategy: deploy powerful AI under low-risk use-case descriptions, then expand functionality incrementally.
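The use-case dependency can be shown in a few lines. The mapping below is a deliberately simplified, hypothetical stand-in for the Act’s classification rules, and the model identifier is invented. The point is only that the tier is a function of declared purpose and never of the model itself.

```python
from dataclasses import dataclass

# Simplified, hypothetical mapping from declared purpose to risk tier.
TIER_BY_PURPOSE = {
    "writing_assistant": "minimal",
    "credit_scoring": "high",
}


@dataclass(frozen=True)
class Deployment:
    model_id: str
    intended_purpose: str


def risk_tier(deployment: Deployment) -> str:
    """Tier depends only on declared purpose; the model is never inspected."""
    return TIER_BY_PURPOSE.get(deployment.intended_purpose, "unclassified")


same_model = "gpai-model-x"  # hypothetical identifier
print(risk_tier(Deployment(same_model, "writing_assistant")))
print(risk_tier(Deployment(same_model, "credit_scoring")))
```

Because `model_id` never enters `risk_tier`, changing the declared purpose is enough to move the same model between tiers.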

The compliance-as-safety illusion. The EU Act’s conformity assessments can create a false sense of security. A system that passes its assessment is “compliant” but not necessarily “safe.” The Eight Principles distinguish between these concepts. Compliance with external rules and genuine alignment with ethical outcomes are different things, and optimising for one doesn’t guarantee the other. When organisations focus resources on passing conformity assessments rather than building genuinely safe systems, the regulation produces compliance theatre rather than safety.

The innovation asymmetry. The Act’s obligations fall disproportionately on providers of high-risk systems, creating a competitive advantage for minimal-risk deployments and for actors outside EU jurisdiction. This may push innovation toward the least regulated categories (where oversight is lightest) and toward non-EU jurisdictions (where the Act doesn’t apply directly). The intention is to make AI safer; the outcome may be to make EU-developed AI more heavily documented while leaving the AI that EU citizens actually interact with largely unaffected.

The static snapshot problem. Risk tiers are determined at market entry. But AI systems change through fine-tuning, through interaction with users, through the accumulation of data that shifts model behaviour over time. A GPAI model classified as below the systemic risk threshold at deployment can be fine-tuned, combined with retrieval systems, or scaled through inference-time compute in ways that effectively change its risk profile without triggering reclassification. The Act’s post-market monitoring provisions don’t adequately address this because they’re designed to catch incidents, not drift.
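Monitoring for drift rather than incidents could look something like the sketch below, which compares the distribution of a system’s output scores at assessment time with the distribution observed later, using the population stability index (PSI). The bins, the proportions, and the alert level of 0.2 are assumptions chosen for illustration; the Act prescribes no such metric.

```python
import math


def psi(baseline, current, eps=1e-6):
    """Population stability index between two binned distributions.

    Both inputs are lists of bin proportions that each sum to 1.
    """
    total = 0.0
    for b, c in zip(baseline, current):
        b, c = max(b, eps), max(c, eps)  # guard against empty bins
        total += (c - b) * math.log(c / b)
    return total


# Hypothetical share of model scores falling into four bins.
at_assessment = [0.25, 0.25, 0.25, 0.25]
after_fine_tune = [0.10, 0.20, 0.30, 0.40]

DRIFT_ALERT = 0.2  # assumed tolerance, not a regulatory value
drift = psi(at_assessment, after_fine_tune)

print(round(drift, 3))
print(drift > DRIFT_ALERT)
```

No incident has occurred in this example, yet the shifted score distribution crosses the assumed alert level. That is the kind of signal an incident-driven regime would not generate.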

The minimal-risk blind spot. The vast majority of AI systems fall into the minimal-risk category with essentially no regulatory obligations. But collectively, minimal-risk systems (recommendation algorithms, content curation, personalisation engines, GPAI-powered chatbots) shape public discourse, consumer behaviour, and cognitive patterns on a massive scale. The Eight Principles (particularly Principles 3, 6, and 8) would treat these collective effects as governance concerns. The EU Act treats them as essentially unregulated.

The Core Tension

The EU AI Act is good regulation in the traditional sense. It creates legal certainty, establishes enforcement mechanisms, and provides a shared vocabulary for discussing AI risk across 27 member states. These are genuine achievements.

But the Eight Principles expose a deeper question the Act doesn’t address: Can a static classification system govern a technology whose defining characteristic is that it changes?

The Act’s risk tiers assume that AI systems, including GPAI models whose capabilities are emergent and context-dependent, can be meaningfully categorised into fixed buckets. The Eight Principles argue that AI governance must be as dynamic, self-correcting, and paradox-aware as the systems it governs. The EU chose enforceability over adaptability. That’s a reasonable trade-off for first-generation regulation, but one whose limitations will become increasingly visible as AI capabilities continue to evolve faster than legislative processes can follow.

The intention behind the EU AI Act is sound: protect fundamental rights while enabling innovation. The outcome may be different: a compliance infrastructure that captures foreseeable risks while systematically missing emergent ones, that regulates declared use cases while leaving actual GPAI capabilities unconstrained, and that creates legal certainty at the cost of the adaptive governance that AI’s pace of change actually demands. Architectures like PASS suggest a direction: not replacing regulation, but building the kind of structural self-monitoring that regulation should be demanding rather than leaving to chance.

Note on the Commission Guidelines (July 2025):

On 29 July 2025, the European Commission published Guidelines on the definition of an AI system (C(2025) 5053 final). These Guidelines define seven elements that determine whether a system qualifies as an AI system under Article 3(1), and explicitly exclude basic data processing, classical heuristics, and simple prediction systems from scope. Notably, paragraph 63 confirms that “the vast majority of systems, even if they qualify as AI systems, will not be subject to any regulatory requirements under the AI Act.” Paragraph 64 places GPAI models outside the scope of the definition guidelines entirely, deferring to Chapter V without clarifying the boundary between AI systems and GPAI models. Both positions reinforce the arguments made in this analysis: the minimal-risk blind spot remains by design, and the GPAI classification ambiguity persists at the Commission’s own level.

Analysis prepared February 2026. Based on comparison of “The Eight Core Principles of AI Governance & Ethics” with EU Regulation 2024/1689 (AI Act) as implemented through February 2026, with reference to the Triadic Agent Architecture (PASS) V1.1.